However, that doesn't mean there are no circumstances in which scraping is illegal. If you're interested in having data scraped for you, you can look into our web scraping service, ParseHub Plus. You can schedule a free call and get a free data export test with no commitment.

- The data collected through web scraping should be used responsibly and ethically.

- Even though web scraping has many productive uses, as is the case with many technologies, cybercriminals have also found ways of abusing it.

- This is why many of the world's best-known companies rely on ScrapeHero for their data.

If you're a site host wanting to manage web scrapers, look no further than Kinsta's managed hosting plans. You can restrict bots and protect valuable data and resources with the many access control tools available. However, it's not always so simple, especially when performing web scraping on a larger scale. One of the biggest challenges of web scraping is keeping your scraper up to date as websites change their layouts or adopt anti-scraping measures. While that's not too difficult if you're only scraping a few websites at a time, scraping more can quickly become a hassle.

Legal and Ethical Aspects and Data Protection

Web scraping is used by almost every industry to extract and analyze data from the web. Companies use the collected data to come up with new business strategies and products. Unless you are taking steps to protect your privacy, companies are using your data to make money. High-quality scraped data obtained in large volumes can be very valuable to companies for analyzing consumer trends and understanding which direction the company should move in. ParseHub is a free online tool (to be clear, this one's not a Python library) that makes it easy to scrape online data. The only catch is that for full functionality you'll need to pay.

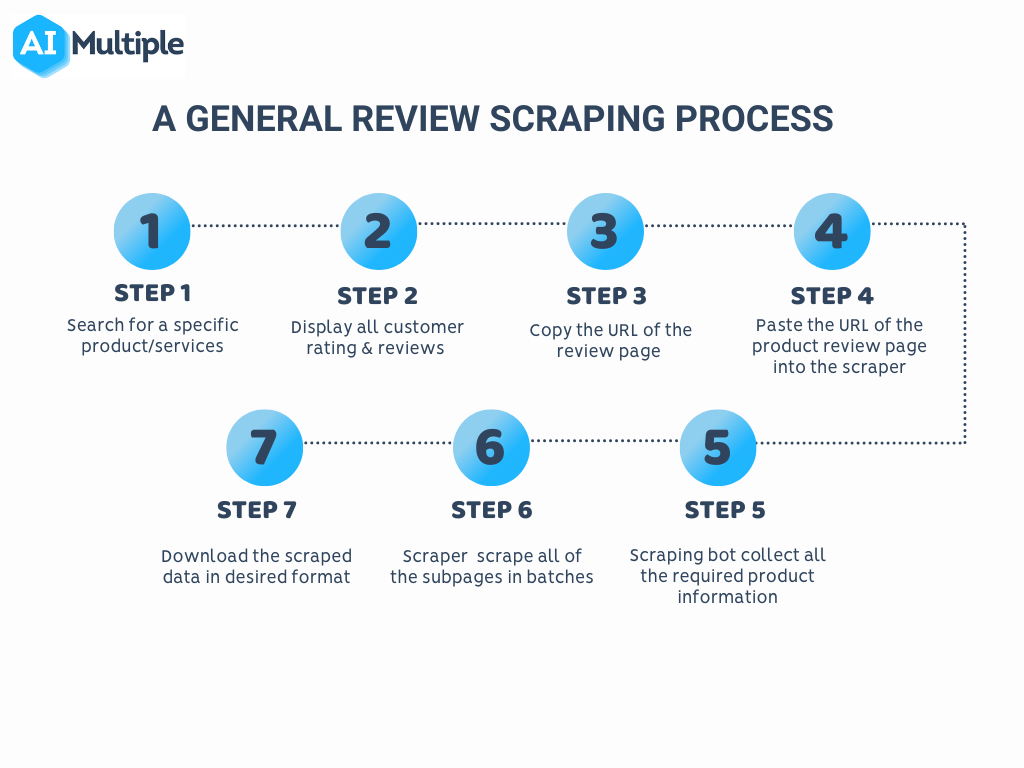

Web scraping is the process of fetching data from websites to be processed later. Typically, web scraping is performed by semi-automated software that downloads web pages and extracts specific, useful information. You can parse, reformat, or store the information in a database. In the e-commerce industry, for example, scraping is used to gather product data, monitor prices, and analyze customer reviews. This data can be used to optimize pricing strategies, improve product descriptions, and identify popular products.
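As a rough illustration of the extraction step, here is a minimal sketch using only Python's standard library. The HTML snippet, the `name` and `price` class names, and the `ProductParser` and `extract_products` helpers are all hypothetical; a real scraper would download the page over HTTP first, and each site's markup will differ.

```python
from html.parser import HTMLParser

# Hypothetical page markup; a real scraper would download this over HTTP.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">39.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects name/price records from <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.products = []   # finished records
        self._field = None   # which span we are currently inside, if any
        self._current = {}   # fields gathered for the current <li>

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that appears inside one of the spans we care about.
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            # Closing </li> marks the end of one product record.
            self.products.append(
                {"name": self._current["name"], "price": float(self._current["price"])}
            )
            self._current = {}


def extract_products(html):
    """Parses an HTML string and returns a list of product dicts."""
    parser = ProductParser()
    parser.feed(html)
    return parser.products


products = extract_products(SAMPLE_HTML)
```

The resulting list of dictionaries could then be reformatted or written to a database, as described above.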

Best Web Scraping Services Compared

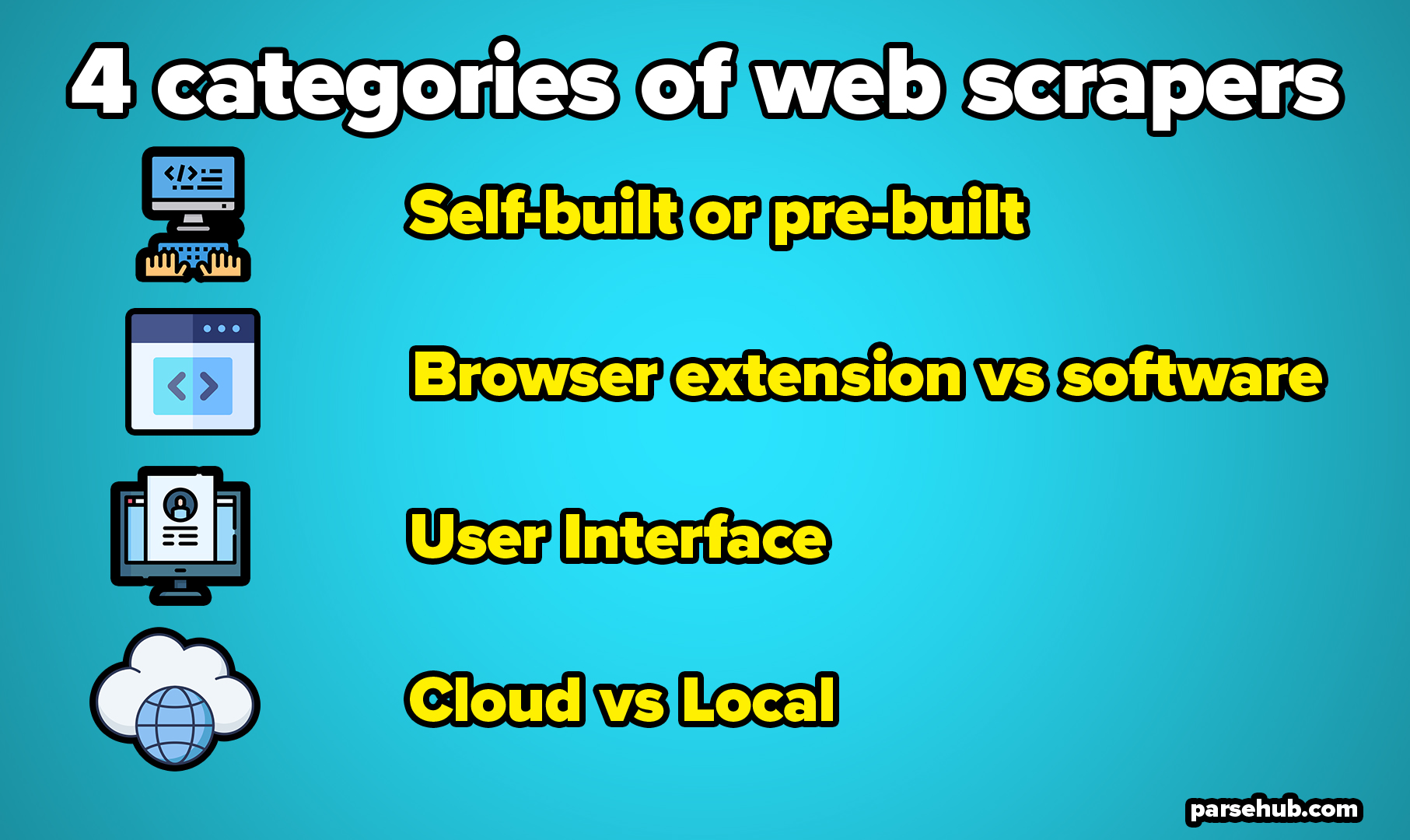

Even if you're collecting the same type of data from each, each website might require a different extraction method. Instead of manually working through a different process on each site, you could use a web scraper to do it automatically. Ever wanted to compare prices from multiple sites at once? Or maybe automatically extract a collection of posts from your favorite blog?

In reality, though, the process isn't carried out just once, but countless times. This comes with its own set of problems that need solving. For example, poorly coded scrapers may send too many HTTP requests, which can crash a site. Every website also has different rules for what bots can and can't do.
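One common way to avoid flooding a site with requests is to enforce a minimum delay between them. The sketch below is one possible approach, not a prescribed method: the `Throttle` class and its parameters are hypothetical names, and the right interval depends on the site being scraped.

```python
import time


class Throttle:
    """Enforces a minimum delay between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds between requests
        self._clock = clock               # injectable for testing
        self._sleep = sleep               # injectable for testing
        self._last = None                 # time of the previous request

    def wait(self):
        """Sleeps if the previous call was too recent; returns seconds slept."""
        delay = 0.0
        if self._last is not None:
            elapsed = self._clock() - self._last
            delay = max(0.0, self.min_interval - elapsed)
            if delay > 0:
                self._sleep(delay)
        self._last = self._clock()
        return delay
```

A scraper would call `throttle.wait()` immediately before each HTTP request, so that requests to the same site are spaced at least `min_interval` seconds apart.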

Take your data collection process to the next level from $50/month + VAT. To prevent web scraping, website operators can take a variety of measures. The robots.txt file is used to block search engine bots, for example.
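A well-behaved scraper can check these rules before fetching a page. The following is a minimal sketch using Python's standard `urllib.robotparser` module; the robots.txt content, the `example-bot` user agent, the example.com URLs, and the `is_allowed` helper are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real scraper would fetch the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Returns True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Check two URLs against the sample rules.
public_ok = is_allowed(ROBOTS_TXT, "example-bot", "https://example.com/products")
private_ok = is_allowed(ROBOTS_TXT, "example-bot", "https://example.com/private/data")
```

Note that robots.txt is advisory: it tells cooperative bots what to avoid, but it does not technically stop a scraper that ignores it.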